archive.pl 2.0 released

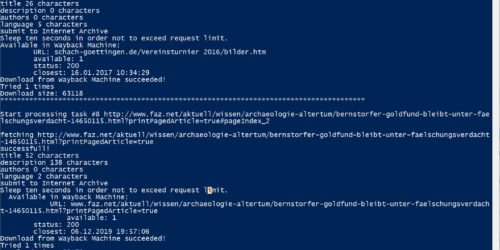

Today I finally released the latest build of my archive.pl script. Because of the extensive changes I decided to bump the major version number, although calls written for earlier versions remain fully compatible.

The main purpose of archive.pl is to store sets of URLs in the Internet Archive (IA) and to store an index of them as an Atom feed, HTML page or WordPress post. IA itself has declared that it does not obey robots.txt, since rules written for crawlers, say those of search engines, do not make much sense for archiving. Accordingly, archive.pl now disregards robots.txt by default as well. The new -r switch changes this.
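To make the robots.txt point concrete, here is a minimal sketch in Python of what obeying robots.txt means for a list of URLs. It is not how archive.pl implements -r; the function name `allowed_urls` and the user agent string `archive-sketch` are made up for illustration, and the robots.txt text is passed in directly rather than fetched.

```python
from urllib.robotparser import RobotFileParser


def allowed_urls(urls, robots_txt, user_agent="archive-sketch"):
    """Return only those URLs that the given robots.txt text permits.

    `user_agent` is a hypothetical name chosen for this sketch.
    """
    parser = RobotFileParser()
    # parse() takes the robots.txt content as a list of lines
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if parser.can_fetch(user_agent, url)]


robots = "User-agent: *\nDisallow: /private/\n"
candidates = [
    "https://example.com/post",
    "https://example.com/private/draft",
]
print(allowed_urls(candidates, robots))
```

Disregarding robots.txt simply means skipping this filter and submitting every URL in the list.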

The biggest change is that archive.pl can now extract all links from the pages and store them in the IA as well, without downloading and analyzing the linked pages itself. In the prior version we used IA’s new web save form for this. But apparently IA experienced a massive load and subsequently restricted the form to logged-in users with JavaScript enabled. Therefore we must now do it ourselves. The new -l switch turns it on. But be careful: in my experience news sites and blogs often contain a huge number of links, and it will take hours to archive them all if the URL list is long.
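The link extraction step can be sketched roughly as follows. This is an illustration in Python, not the code of archive.pl: the names `LinkCollector`, `extract_links` and `save_urls` are hypothetical, and the sketch assumes that a page is submitted by requesting the Wayback Machine address `https://web.archive.org/save/` followed by the URL. It only builds the request addresses and does not contact the IA.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urldefrag

# Assumed submission endpoint: the save address is followed by the target URL.
SAVE_ENDPOINT = "https://web.archive.org/save/"


class LinkCollector(HTMLParser):
    """Collects absolute http(s) targets of <a href="..."> tags, without duplicates."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links and drop the #fragment part
                absolute, _fragment = urldefrag(urljoin(self.base_url, value))
                is_web = absolute.startswith(("http://", "https://"))
                if is_web and absolute not in self.links:
                    self.links.append(absolute)


def extract_links(html, base_url):
    """Return all distinct web links found in the HTML of one page."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    collector.close()
    return collector.links


def save_urls(links):
    """Build one save request address per link."""
    return [SAVE_ENDPOINT + link for link in links]


page = '<a href="/about">About</a> <a href="mailto:me@example.com">Mail</a>'
for address in save_urls(extract_links(page, "https://example.com/post")):
    print(address)
```

Since every extracted link becomes one more request, a single news page with a few hundred links multiplies the work accordingly, which is why a long URL list can take hours.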

The other improvements are minor. In particular, the annoying error saying that the index post cannot be stored in the WordPress database should be gone now. Head over to the project page to get the script and further advice.